162:

4134:

1176:

838:

283:, who had been developing inverse probability methods, had his own questions about the validity of the process. Although fiducial inference was developed in the early twentieth century, by the late twentieth century the method was generally regarded as inferior to the frequentist and Bayesian approaches, while still holding an important place in the history of statistical inference. However, modern approaches have generalized the fiducial interval into Generalized Fiducial Inference (GFI), which can be applied to both discrete and continuous data sets.

4120:

1129:

interval estimates can be formulated. In this regard confidence intervals and credible intervals have a similar standing, but there are two differences. First, credible intervals can readily deal with prior information, while confidence intervals cannot. Second, confidence intervals are more flexible and can be used practically in more situations than credible intervals: one area where credible intervals suffer in comparison is in dealing with

4158:

4146:

1137:

makes this straightforward in the case of confidence intervals, but it is somewhat more problematic for credible intervals, where prior information needs to be taken properly into account. Credible intervals can be checked for situations representing no prior information, but the check involves examining the long-run frequency properties of the procedures.
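As an illustration of checking an interval procedure by stochastic simulation, the following minimal sketch estimates the long-run coverage of a two-sided interval for a normal mean with known standard deviation. The function names, the parameter values (`mu`, `sigma`, `n`, `reps`), and the choice of a z-based interval are assumptions made for this example only.

```python
import random
from statistics import NormalDist, fmean


def z_interval(sample, sigma, gamma=0.95):
    """Two-sided 100*gamma% interval for the mean, assuming sigma is known."""
    z = NormalDist().inv_cdf(0.5 + gamma / 2)  # two-sided critical value
    half_width = z * sigma / len(sample) ** 0.5
    center = fmean(sample)
    return center - half_width, center + half_width


def estimate_coverage(mu=10.0, sigma=2.0, n=25, gamma=0.95, reps=4000, seed=0):
    """Fraction of simulated samples whose interval contains the true mean."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(reps):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        lower, upper = z_interval(sample, sigma, gamma)
        hits += lower < mu < upper
    return hits / reps


if __name__ == "__main__":
    print(f"empirical coverage: {estimate_coverage():.3f}")
```

If the procedure performs as claimed, the empirical coverage should be close to the nominal level γ; a large gap suggests that an approximation or assumption behind the procedure does not hold.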

1164:

guidelines for using them. In manufacturing, it is also common to find interval estimates of a product's life, or interval estimates used to evaluate the tolerances of a product. Meeker and Escobar (1998) present methods to analyze reliability data under parametric and nonparametric estimation, including the prediction of future random variables (prediction intervals).

122:. A confidence interval states that there is 100γ% confidence that the parameter of interest lies between a lower and an upper bound. A common misconception about confidence intervals is that 100γ% of the data set falls within or above/below the bounds; that is instead a tolerance interval, which is discussed below.

1121:. After experimenting, a typical first step in creating interval estimates is plotting the data using various graphical methods. From this, one can determine the distribution of samples from the data set. Interval boundaries built on incorrect distributional assumptions lead to faulty predictions.

294:

use a collected data set to obtain an interval, within tolerance limits, containing 100γ% of the population values. Examples typically used to describe tolerance intervals include manufacturing. In this context, a percentage of an existing product set is evaluated to ensure that a percentage of the population

1163:

Confidence intervals are applied to a variety of problems dealing with uncertainty. Katz (1975) discusses various challenges and benefits of using interval estimates in legal proceedings. For use in medical research, Altman (1990) discusses the use of confidence intervals and

1136:

There should be ways of testing the performance of interval estimation procedures. This arises because many such procedures involve approximations of various kinds and there is a need to check that the actual performance of a procedure is close to what is claimed. The use of stochastic simulations

964:

In contrast to the two-sided interval, the one-sided interval uses a level of confidence, γ, to construct a minimum or maximum bound that contains the parameter of interest with 100γ% probability. Typically, a one-sided interval is required when the estimate's minimum or maximum bound is

259:

Utilizes the principles of a likelihood function to estimate the parameter of interest. Utilizing the likelihood-based method, confidence intervals can be found for exponential, Weibull, and lognormal means. Additionally, likelihood-based approaches can give confidence intervals for the standard

1128:

In commonly occurring situations there should be sets of standard procedures that can be used, subject to the checking and validity of any required assumptions. This applies for both confidence intervals and credible intervals. However, in more novel situations there should be guidance on how

899:). Examples may include estimating the average height of males in a geographic region or lengths of a particular desk made by a manufacturer. These cases tend to estimate the central value of a parameter. Typically, this is presented in a form similar to the equation below.

237:

While a prior assumption supplies additional information for building an interval, it removes the objectivity of a confidence interval. A prior is used to inform a posterior; if left unchallenged, this prior can lead to incorrect predictions.

268:

Fiducial inference takes a data set, carefully removes the noise, and recovers a distribution estimator, the Generalized Fiducial Distribution (GFD). Because Bayes' theorem is not used, no prior is assumed, much like confidence intervals.

663:

1116:

When determining the significance of a parameter, it is best to understand the data and its collection methods. Before collecting data, an experiment should be planned such that the uncertainty of the data is due to sample variability, as opposed to a

241:

The credible interval's bounds are variable, unlike the confidence interval. There are multiple methods to determine where the correct upper and lower limits should be located. Common techniques to adjust the bounds of the interval include

233:

1124:

When interval estimates are reported, they should have a commonly held interpretation within and beyond the scientific community. Interval estimates derived from fuzzy logic have much more application-specific meanings.

1151:, which is a common approach to and justification for Bayesian statistics, interval estimation is not of direct interest. The outcome is a decision, not an interval estimate, and thus Bayesian decision theorists use a

1044:) will increase. Likewise, when concerned with finding only an upper bound of a parameter's estimate, the upper bound will decrease. A one-sided interval is commonly found in material production's

960:

810:

contexts. These intervals are typically used in regression data sets, but prediction intervals are not used for extrapolation beyond the previous data's experimentally controlled parameters.

1815:

and includes a discussion comparing the three approaches. Note that this work predates modern computationally intensive methodologies. In addition, Chapter 21 discusses the Behrens–Fisher problem.

965:

not of interest. When concerned about the minimum predicted value of Θ, one is no longer required to find an upper bound of the estimate, leading to a reduced form of the two-sided interval.
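The relationship between the two-sided and one-sided forms can be sketched numerically. The example below assumes a normally distributed estimate with known standard deviation; the function names and the input values are hypothetical and chosen only to show that, at the same confidence γ, dropping the upper bound moves the lower bound closer to the estimate.

```python
from statistics import NormalDist


def two_sided_bounds(mean, sigma, n, gamma):
    """Bounds (l_b, u_b) such that P(l_b < Theta < u_b) = gamma."""
    z = NormalDist().inv_cdf(0.5 + gamma / 2)  # tail area split in two
    half_width = z * sigma / n ** 0.5
    return mean - half_width, mean + half_width


def one_sided_lower_bound(mean, sigma, n, gamma):
    """Bound l_b such that P(l_b < Theta) = gamma."""
    z = NormalDist().inv_cdf(gamma)  # the whole tail area sits below l_b
    return mean - z * sigma / n ** 0.5


if __name__ == "__main__":
    l2, u2 = two_sided_bounds(mean=50.0, sigma=4.0, n=16, gamma=0.95)
    l1 = one_sided_lower_bound(mean=50.0, sigma=4.0, n=16, gamma=0.95)
    print(f"two-sided: ({l2:.3f}, {u2:.3f})  one-sided lower: {l1:.3f}")
```

Because the one-sided critical value is smaller than the two-sided one at the same γ, the one-sided lower bound is higher than the lower bound of the two-sided interval.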

391:

1013:

711:

498:

448:

560:

788:

821:

is used to handle decision-making in a non-binary fashion for artificial intelligence, medical decisions, and other fields. In general, it takes inputs, maps them through

129:, one uses the z-table to create an interval, centered on the sample mean of a data set of n measurements, for which a confidence level of 100γ% is obtained. For a

825:, and produces an output decision. This process involves fuzzification, fuzzy logic rule evaluation, and defuzzification. When looking at fuzzy logic rule evaluation,

295:

is included within tolerance limits. When creating tolerance intervals, the bounds can be written in terms of an upper and lower tolerance limit, utilizing the sample

751:

1100:

1073:

1042:

897:

870:

553:

526:

731:

317:

125:

There are multiple methods used to build a confidence interval; the correct choice depends on the data being analyzed. For a normal distribution with a known

194:

1075:) with some confidence (100γ%). In this case, the manufacturer is not concerned with producing a product that is too strong, so there is no upper bound (

829:

convert our non-binary input information into tangible variables. These membership functions are essential to predict the uncertainty of the system.
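A membership function of the kind described here can be sketched as follows. The triangular shape, the function names, and the temperature categories are assumptions chosen for illustration; they are not taken from a particular fuzzy inference system.

```python
def triangular_membership(x, left, peak, right):
    """Degree of membership in [0, 1] for a triangular fuzzy set."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)  # rising edge
    return (right - x) / (right - peak)    # falling edge


def fuzzify_temperature(celsius):
    """Map a crisp temperature onto hypothetical 'cold', 'warm', 'hot' sets."""
    return {
        "cold": triangular_membership(celsius, -10.0, 0.0, 15.0),
        "warm": triangular_membership(celsius, 5.0, 20.0, 30.0),
        "hot": triangular_membership(celsius, 25.0, 35.0, 50.0),
    }


if __name__ == "__main__":
    print(fuzzify_temperature(12.0))
```

A single input can belong partly to several sets at once, which is what lets the later rule-evaluation step work with graded rather than binary inputs.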

1140:

Severini discusses conditions under which credible intervals and confidence intervals will produce similar results, and also discusses both the

3255:

1837:

3760:

3910:

3534:

2175:

1482:

Hannig, Jan; Iyer, Hari; Lai, Randy C. S.; Lee, Thomas C. M. (2016-07-02). "Generalized

Fiducial Inference: A Review and New Results".

260:

deviation. It is also possible to create a prediction interval by combining the likelihood function and the future random variable.

3308:

3747:

138:

134:

1781:

1756:

1466:

1384:

1155:: they minimize expected loss of a loss function with respect to the entire posterior distribution, not a specific interval.

2170:

1870:

904:

2774:

1922:

243:

3557:

3449:

826:

802:

estimates the interval containing future samples with some confidence, γ. Prediction intervals can be used for both

4162:

3735:

3609:

1776:. Wiley series in probability and statistics Applied probability and statistics section. New York Weinheim: Wiley.

1111:

328:

3793:

3454:

3199:

2570:

2160:

970:

672:

4184:

3844:

3056:

2863:

2752:

2710:

1237:

This has played an important role in the development of the theory behind applicable statistical methodologies.

2784:

4087:

3046:

1949:

399:

And in the case of one-sided intervals where the tolerance is required only above or below a critical value,

1838:

https://web.archive.org/web/20061205114153/http://blog.peltarion.com/2006/10/25/fuzzy-math-part-1-the-theory

3638:

3587:

3572:

3562:

3431:

3303:

3270:

3096:

3051:

2881:

114:

Confidence intervals are used to estimate the parameter of interest from a sampled data set, commonly the

4150:

3982:

3783:

3707:

3008:

2762:

2431:

1895:

1329:

Severini, Thomas A. (1991). "On the

Relationship between Bayesian and Non-Bayesian Interval Estimates".

3867:

3839:

3834:

3582:

3341:

3247:

3227:

3135:

2846:

2664:

2147:

2019:

1264:

Philosophical

Transactions of the Royal Society of London. Series A, Mathematical and Physical Sciences

1234:

845:

Two-sided intervals estimate a parameter of interest, Θ, with a level of confidence, γ, using a lower (

658:{\displaystyle k_{2}=z_{\alpha /2}{\sqrt {\frac {\nu (1+{\frac {1}{N}})}{\chi _{1-\alpha ,\nu }^{2}}}}}

528:

varies by distribution and the number of sides, i, in the interval estimate. In a normal distribution,

454:

404:

150:

3599:

3367:

3088:

3013:

2942:

2871:

2791:

2779:

2649:

2637:

2630:

2338:

2059:

1811:

In the above

Chapter 20 covers confidence intervals, while Chapter 21 covers fiducial intervals and

1748:

Statistics with confidence: confidence intervals and statistical guidelines; [includes disk]

185:

the parameter of interest is included, as opposed to the confidence interval where one can be 100γ%

4082:

3849:

3712:

3397:

3362:

3326:

3111:

2553:

2462:

2421:

2333:

2024:

1863:

1224:

1130:

758:

142:

841:

Differentiating between two-sided and one-sided intervals on a standard normal distribution curve.

3991:

3604:

3544:

3481:

3119:

3103:

2841:

2703:

2693:

2543:

2457:

1189:

807:

4029:

3959:

3752:

3689:

3444:

3331:

2328:

2225:

2132:

2011:

1910:

1209:

37:

1400:

Hespanhol, Luiz; Vallio, Caio Sain; Costa, Lucíola

Menezes; Saragiotto, Bruno T (2019-07-01).

1048:, where an expected value of a material's strength, Θ, must be above a certain minimum value (

4054:

3996:

3939:

3765:

3658:

3567:

3293:

3177:

3036:

3028:

2918:

2910:

2725:

2621:

2599:

2558:

2523:

2490:

2436:

2411:

2366:

2305:

2265:

2067:

1890:

1204:

1194:

736:

276:

178:

130:

42:

1260:"Outline of a Theory of Statistical Estimation Based on the Classical Theory of Probability"

1144:

of credible intervals and the posterior probabilities associated with confidence intervals.

3977:

3552:

3501:

3477:

3439:

3357:

3336:

3288:

3167:

3145:

3114:

3023:

2900:

2851:

2769:

2742:

2698:

2654:

2416:

2192:

2072:

1271:

1229:

1219:

1199:

1141:

1078:

1051:

1020:

875:

848:

822:

531:

504:

146:

1635:

1402:"Understanding and interpreting confidence and credible intervals around effect estimates"

716:

302:

8:

4124:

4049:

3972:

3653:

3417:

3410:

3372:

3280:

3260:

3232:

2965:

2831:

2826:

2816:

2626:

2587:

2477:

2467:

2376:

2155:

2111:

2029:

1954:

1856:

1017:

As a result of removing the upper bound and maintaining the confidence, the lower-bound (

803:

799:

161:

93:

74:

54:

28:

1275:

4138:

3949:

3803:

3699:

3648:

3524:

3421:

3405:

3382:

3159:

2893:

2876:

2836:

2747:

2642:

2604:

2575:

2535:

2495:

2441:

2358:

2044:

2039:

1434:

1401:

1342:

1305:

1297:

1181:

320:

291:

272:

119:

86:

837:

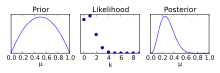

228:{\displaystyle {\text{Posterior}}\ \propto \ {\text{Likelihood}}\times {\text{Prior}}}

165:

Bayesian

Distribution: Adjusting a prior distribution to form a posterior probability.

4133:

4044:

4014:

4006:

3826:

3817:

3742:

3673:

3529:

3514:

3489:

3377:

3318:

3184:

3172:

2798:

2715:

2659:

2582:

2426:

2348:

2127:

2001:

1812:

1777:

1752:

1727:

1688:

1657:

1652:

1616:

1577:

1538:

1499:

1462:

1439:

1421:

1380:

1346:

1289:

1175:

1118:

1045:

170:

80:

64:

1309:

246:(HPDI), the equal-tailed interval, or centering the interval around the mean.
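The relation Posterior ∝ Likelihood × Prior and the equal-tailed choice of bounds can be sketched with a simple grid approximation. The binomial likelihood, the flat default prior, the grid size, and the function names below are all assumptions made for this illustration.

```python
def grid_posterior(successes, trials, prior=lambda p: 1.0, points=2001):
    """Normalized posterior weights for a binomial proportion on a grid."""
    grid = [i / (points - 1) for i in range(points)]
    # Posterior is proportional to likelihood times prior at each grid point.
    unnormalized = [
        p ** successes * (1 - p) ** (trials - successes) * prior(p)
        for p in grid
    ]
    total = sum(unnormalized)
    return grid, [w / total for w in unnormalized]


def equal_tailed_interval(grid, weights, gamma=0.95):
    """Bounds that leave (1 - gamma) / 2 posterior mass in each tail."""
    tail = (1 - gamma) / 2
    cumulative = 0.0
    lower = upper = None
    for p, w in zip(grid, weights):
        cumulative += w
        if lower is None and cumulative >= tail:
            lower = p
        if upper is None and cumulative >= 1 - tail:
            upper = p
            break
    return lower, upper


if __name__ == "__main__":
    grid, weights = grid_posterior(successes=7, trials=10)
    print(equal_tailed_interval(grid, weights, gamma=0.95))
```

Passing a different `prior` function changes the posterior and therefore the interval, which is the sense in which the bounds of a credible interval depend on the prior assumption.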

4069:

4024:

3788:

3775:

3668:

3643:

3577:

3509:

3387:

2995:

2888:

2821:

2734:

2681:

2500:

2371:

2165:

2049:

1964:

1931:

1719:

1647:

1608:

1569:

1530:

1491:

1429:

1413:

1372:

1338:

1279:

46:

1708:"Presentation of a Confidence Interval Estimate as Evidence in a Legal Proceeding"

1495:

3986:

3730:

3592:

3519:

3194:

3068:

3041:

3018:

2987:

2614:

2609:

2563:

2293:

1944:

1214:

1148:

69:

3476:

3935:

3930:

2393:

2323:

1969:

1746:

1417:

1843:

4178:

4092:

4059:

3922:

3883:

3694:

3663:

3127:

3081:

2686:

2388:

2215:

1979:

1974:

1731:

1692:

1661:

1620:

1581:

1542:

1503:

1425:

1350:

1293:

280:

4034:

3967:

3944:

3859:

3189:

2485:

2383:

2318:

2260:

2245:

2182:

2137:

1443:

1284:

1255:

1152:

174:

1376:

4077:

4039:

3722:

3623:

3485:

3298:

3265:

2757:

2674:

2669:

2313:

2270:

2250:

2230:

2220:

1989:

818:

98:

59:

2923:

2403:

2103:

2034:

1984:

1959:

1879:

1707:

1676:

1596:

1557:

97:. For a non-statistical method, interval estimates can be deduced from

32:

20:

1519:"Two-Sided Tolerance Limits for Normal Populations, Some Improvements"

3076:

2928:

2548:

2343:

2255:

2240:

2235:

2200:

1634:

Hahn, Gerald J.; Doganaksoy, Necip; Meeker, William Q. (2019-08-01).

1518:

1301:

145:. The Jeffreys method can also be used to approximate intervals for a

1802:

The

Advanced Theory of Statistics. Vol 2: Inference and Relationship

1723:

1612:

1573:

1534:

1367:

Meeker, William Q.; Hahn, Gerald J.; Escobar, Luis A. (2017-03-27).

1259:

169:

As opposed to a confidence interval, a credible interval requires a

2592:

2210:

2087:

2082:

2077:

1681:

Journal of the Royal Statistical Society. Series B (Methodological)

1331:

Journal of the Royal Statistical Society, Series B (Methodological)

126:

1371:. Wiley Series in Probability and Statistics (1 ed.). Wiley.

181:. Utilizing the posterior distribution, one can determine a 100γ%

4097:

3798:

1677:"Bayesian Interval Estimates which are also Confidence Intervals"

1824:

Statistical Intervals: A Guide for Practitioners and Researchers

1369:

Statistical Intervals: A Guide for Practitioners and Researchers

4019:

3000:

2974:

2954:

2205:

1996:

713:

is the critical value of the chi-square distribution utilizing

1399:

790:

is the critical value obtained from the normal distribution.
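The two-sided tolerance factor k_2 and the resulting limits can be evaluated directly once the critical values are known. In the sketch below the normal and chi-square critical values are passed in as arguments, on the assumption that they are read from tables or statistical software; the function names and the numbers in the usage example are hypothetical.

```python
from math import sqrt


def howe_k2(z_crit, chi2_crit, nu, n):
    """k_2 = z * sqrt(nu * (1 + 1/N) / chi2), with both critical values supplied."""
    return z_crit * sqrt(nu * (1 + 1 / n) / chi2_crit)


def two_sided_tolerance_limits(mean, s, k2):
    """(l_b, u_b) = mean -/+ k_2 * s, using the sample mean and standard deviation."""
    return mean - k2 * s, mean + k2 * s


if __name__ == "__main__":
    # Hypothetical inputs: the critical values here are placeholders, not table lookups.
    k2 = howe_k2(z_crit=2.0, chi2_crit=9.0, nu=8, n=8)
    print(k2, two_sided_tolerance_limits(mean=10.0, s=1.5, k2=k2))
```

Keeping the critical values as inputs avoids committing to a particular tail convention for the chi-square quantile, which should be checked against the definition of the symbols in use.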

1848:

149:. If the underlying distribution is unknown, one can utilize

1939:

296:

115:

16:

Interval bounded by an upper and a lower limit statistics

1105:

1636:"Statistical Intervals, Not Statistical Significance"

1112:

Tolerance interval § Relation to other intervals

1081:

1054:

1023:

973:

907:

878:

851:

761:

739:

733:

degrees of freedom that is exceeded with probability

719:

675:

563:

534:

507:

457:

407:

331:

305:

197:

133:, confidence intervals can be approximated using the

3761:

Autoregressive conditional heteroskedasticity (ARCH)

1633:

1171:

955:{\displaystyle P(l_{b}<\Theta <u_{b})=\gamma }

52:

The most prevalent forms of interval estimation are

1822:Meeker, W.Q., Hahn, G.J. and Escobar, L.A. (2017).

153:to create bounds about the median of the data set.
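When the underlying distribution is unknown, one resampling approach to bounding the median is a percentile bootstrap. The sketch below assumes that approach; the function name, the number of resamples, and the sample data are hypothetical, and other distribution-free methods could be substituted.

```python
import random
from statistics import median


def bootstrap_median_interval(data, gamma=0.95, resamples=2000, seed=0):
    """Percentile-bootstrap bounds for the median of `data`."""
    rng = random.Random(seed)
    n = len(data)
    # Resample with replacement and record the median of each resample.
    medians = sorted(
        median(rng.choices(data, k=n)) for _ in range(resamples)
    )
    tail = (1 - gamma) / 2
    lower_index = int(tail * resamples)
    upper_index = int((1 - tail) * resamples) - 1
    return medians[lower_index], medians[upper_index]


if __name__ == "__main__":
    measurements = [4.1, 5.0, 5.2, 5.9, 6.3, 6.8, 7.4, 8.0, 9.5, 12.1]
    print(bootstrap_median_interval(measurements))
```

The bounds come from the empirical distribution of resampled medians, so no parametric form is assumed for the data.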

3223:

1094:

1067:

1036:

1007:

954:

891:

864:

782:

745:

725:

705:

657:

547:

520:

492:

442:

385:

311:

227:

1366:

189:that an estimate is included within an interval.

173:assumption, modifying the assumption utilizing a

4176:

1481:

3309:Multivariate adaptive regression splines (MARS)

1523:Journal of the American Statistical Association

1484:Journal of the American Statistical Association

1461:(4. ed., 1. publ ed.). Chichester: Wiley.

104:

1864:

1772:Meeker, William Q.; Escobar, Luis A. (1998).

1771:

1595:Hahn, Gerald J.; Meeker, William Q. (1993).

386:{\displaystyle (l_{b},u_{b})=\mu \pm k_{2}s}

1844:https://www.youtube.com/watch?v=__0nZuG4sTw

1322:

1008:{\displaystyle P(l_{b}<\Theta )=\gamma }

1909:

1871:

1857:

1594:

832:

706:{\displaystyle \chi _{1-\alpha ,\nu }^{2}}

2522:

1651:

1433:

1283:

1248:

1774:Statistical methods for reliability data

1751:(2. ed., ed.). London: BMJ Books.

1674:

1555:

1328:

836:

160:

1597:"Assumptions for Statistical Inference"

109:

4177:

3835:Kaplan–Meier estimator (product limit)

1744:

1254:

3908:

3475:

3222:

2521:

2291:

1908:

1852:

1826:(2nd Edition). John Wiley & Sons.

1800:Kendall, M.G. and Stuart, A. (1973).

1406:Brazilian Journal of Physical Therapy

156:

4145:

3845:Accelerated failure time (AFT) model

1705:

1516:

1459:Bayesian statistics: an introduction

1362:

1360:

1106:Caution using and building estimates

249:

45:of interest. This is in contrast to

4157:

3440:Analysis of variance (ANOVA, anova)

2292:

1456:

1270:(767). The Royal Society: 333–380.

254:

13:

3535:Cochran–Mantel–Haenszel statistics

2161:Pearson product-moment correlation

1343:10.1111/j.2517-6161.1991.tb01849.x

993:

927:

244:highest posterior density interval

14:

4196:

1830:

1558:"What about the Other Intervals?"

1357:

493:{\displaystyle u_{b}=\mu +k_{1}s}

443:{\displaystyle l_{b}=\mu -k_{1}s}

4156:

4144:

4132:

4119:

4118:

3909:

1745:Altman, Douglas G., ed. (2011).

1653:10.1111/j.1740-9713.2019.01298.x

1174:

3794:Least-squares spectral analysis

1804:(3rd Edition). Griffin, London.

1794:

1765:

1738:

1699:

1668:

1627:

1158:

2775:Mean-unbiased minimum-variance

1878:

1588:

1549:

1510:

1475:

1450:

1393:

996:

977:

943:

911:

813:

621:

602:

358:

332:

49:, which gives a single value.

1:

4088:Geographic information system

3304:Simultaneous equations models

1556:Vardeman, Stephen B. (1992).

1496:10.1080/01621459.2016.1165102

1241:

793:

783:{\displaystyle z_{\alpha /2}}

72:). Less common forms include

3271:Coefficient of determination

2882:Uniformly most powerful test

1675:Severini, Thomas A. (1993).

286:

105:Types of interval estimation

7:

3840:Proportional hazards models

3784:Spectral density estimation

3766:Vector autoregression (VAR)

3200:Maximum posterior estimator

2432:Randomized controlled trial

1167:

555: can be expressed as

263:

10:

4201:

3600:Multivariate distributions

2020:Average absolute deviation

1418:10.1016/j.bjpt.2018.12.006

1109:


1836:Fuzzy Math Introductions

1712:The American Statistician

1601:The American Statistician

1562:The American Statistician

1517:Howe, W. G. (June 1969).

275:is a less common form of


1225:Philosophy of statistics

396:for two-sided intervals

393:for two-sided intervals

143:Clopper-Pearson interval

41:of possible values of a

3743:Cross-correlation (XCF)

3351:Non-standard predictors

2785:Lehmann–Scheffé theorem

2458:Adaptive clinical trial

833:One-sided vs. two-sided

823:fuzzy inference systems

746:{\displaystyle \alpha }

135:Wald Approximate Method


1457:Lee, Peter M. (2012).

1285:10.1098/rsta.1937.0005

1235:Behrens–Fisher problem

1210:Induction (philosophy)

1142:coverage probabilities

1096:

1069:

1038:

1009:

956:

893:

866:

842:

784:

747:

727:

707:

659:

549:

522:

494:

444:

387:

313:

229:

179:posterior distribution

166:


1842:What is Fuzzy Logic?

1377:10.1002/9781118594841

1337:(3). Wiley: 611–618.

1205:Estimation statistics

1195:Algorithmic inference

1131:non-parametric models

1097:

1095:{\displaystyle u_{b}}

1070:

1068:{\displaystyle l_{b}}

1039:

1037:{\displaystyle l_{b}}

1010:

957:

894:

892:{\displaystyle u_{b}}

867:

865:{\displaystyle l_{b}}

840:

785:

748:

728:

708:

660:

550:

548:{\displaystyle k_{2}}

523:

521:{\displaystyle k_{i}}

495:

445:

388:

314:

277:statistical inference

230:

164:

131:Binomial distribution


1230:Predictive inference

1220:Multiple comparisons

1200:Coverage probability

1079:

1052:

1021:

971:

905:

876:

849:

827:membership functions

759:

737:

726:{\displaystyle \nu }

717:

673:

561:

532:

505:

455:

405:

329:

312:{\displaystyle \mu }

303:

195:

177:, and determining a

147:Poisson distribution

110:Confidence intervals

94:prediction intervals

75:likelihood intervals

55:confidence intervals


1276:1937RSPTA.236..333N

872:) and upper bound (

800:prediction interval

702:

651:

292:Tolerance intervals

87:tolerance intervals

25:interval estimation


1813:Bayesian intervals

1706:Katz, Leo (1975).

1490:(515): 1346–1361.

1182:Mathematics portal

1092:

1065:

1034:

1005:

952:

889:

862:

843:

780:

743:

723:

703:

676:

655:

625:

545:

518:

490:

440:

383:

321:standard deviation

309:

273:Fiducial inference

225:

167:

157:Credible intervals

120:standard deviation

81:fiducial intervals

65:credible intervals

4172:

4171:

4110:

4109:

4106:

4105:

4045:National accounts

4015:Actuarial science

4007:Social statistics

3900:

3899:

3896:

3895:

3892:

3891:

3827:Survival function

3812:

3811:

3674:Granger causality

3515:Contingency table

3490:Survival analysis

3467:

3466:

3463:

3462:

3319:Linear regression

3214:

3213:

3210:

3209:

3185:Credible interval

3154:

3153:

2937:

2936:

2753:Method of moments

2622:Parametric family

2583:Statistical model

2513:

2512:

2509:

2508:

2427:Random assignment

2349:Statistical power

2283:

2282:

2279:

2278:

2128:Contingency table

2098:

2097:

1965:Generalized/power

1783:978-0-471-14328-4

1758:978-0-7279-1375-3

1468:978-1-118-33257-3

1386:978-0-471-68717-7

1046:quality assurance

653:

652:

619:

319:, and the sample

250:Less common forms

223:

215:

211:

205:

201:

139:Jeffreys interval

4192:

4160:

4159:

4148:

4147:

4137:

4136:

4122:

4121:

4025:Crime statistics

3919:

3918:

3906:

3905:

3823:

3822:

3789:Fourier analysis

3776:Frequency domain

3756:

3703:

3669:Structural break

3629:

3628:

3578:Cluster analysis

3525:Log-linear model

3498:

3497:

3473:

3472:

3414:

3388:Homoscedasticity

3244:

3243:

3220:

3219:

3139:

3131:

3123:

3122:(Kruskal–Wallis)

3107:

3092:

3047:Cross validation

3032:

3014:Anderson–Darling

2961:

2948:

2947:

2919:Likelihood-ratio

2911:Parametric tests

2889:Permutation test

2872:1- & 2-tails

2763:Minimum distance

2735:Point estimation

2731:

2730:

2682:Optimal decision

2633:

2532:

2531:

2519:

2518:

2501:Quasi-experiment

2451:Adaptive designs

2302:

2301:

2289:

2288:

2166:Rank correlation

1928:

1927:

1919:

1918:

1906:

1905:

1873:

1866:

1859:

1850:

1849:

1788:

1787:

1769:

1763:

1762:

1742:

1736:

1735:

1703:

1697:

1696:

1672:

1666:

1665:

1655:

1631:

1625:

1624:

1592:

1586:

1585:

1553:

1547:

1546:

1514:

1508:

1507:

1479:

1473:

1472:

1454:

1448:

1447:

1437:

1397:

1391:

1390:

1364:

1355:

1354:

1326:

1320:

1319:

1317:

1316:

1287:

1252:

1184:

1179:

1178:

1119:statistical bias

1101:

1099:

1098:

1093:

1091:

1090:

1074:

1072:

1071:

1066:

1064:

1063:

1043:

1041:

1040:

1035:

1033:

1032:

1014:

1012:

1011:

1006:

989:

988:

961:

959:

958:

953:

942:

941:

923:

922:

898:

896:

895:

890:

888:

887:

871:

869:

868:

863:

861:

860:

789:

787:

786:

781:

779:

778:

774:

752:

750:

749:

744:

732:

730:

729:

724:

712:

710:

709:

704:

701:

696:

664:

662:

661:

656:

654:

650:

645:

624:

620:

612:

597:

596:

594:

593:

589:

573:

572:

554:

552:

551:

546:

544:

543:

527:

525:

524:

519:

517:

516:

499:

497:

496:

491:

486:

485:

467:

466:

449:

447:

446:

441:

436:

435:

417:

416:

392:

390:

389:

384:

379:

378:

357:

356:

344:

343:

318:

316:

315:

310:

255:Likelihood-based

234:

232:

231:

226:

224:

221:

216:

213:

209:

203:

202:

199:

47:point estimation

4200:

4199:

4195:

4194:

4193:

4191:

4190:

4189:

4175:

4174:

4173:

4168:

4131:

4102:

4064:

4001:

3987:quality control

3954:

3936:Clinical trials

3913:

3888:

3872:

3860:Hazard function

3854:

3808:

3770:

3754:

3717:

3713:Breusch–Godfrey

3701:

3678:

3618:

3593:Factor analysis

3539:

3520:Graphical model

3492:

3459:

3426:

3412:

3392:

3346:

3313:

3275:

3238:

3237:

3206:

3150:

3137:

3129:

3121:

3105:

3090:

3069:Rank statistics

3063:

3042:Model selection

3030:

2988:Goodness of fit

2982:

2959:

2933:

2905:

2858:

2803:

2792:Median unbiased

2720:

2631:

2564:Order statistic

2526:

2505:

2472:

2446:

2398:

2353:

2296:

2294:Data collection

2275:

2187:

2142:

2116:

2094:

2054:

2006:

1923:Continuous data

1913:

1900:

1882:

1877:

1833:

1797:

1792:

1791:

1784:

1770:

1766:

1759:

1743:

1739:

1724:10.2307/2683480

1704:

1700:

1673:

1669:

1632:

1628:

1613:10.2307/2684774

1593:

1589:

1574:10.2307/2685212

1554:

1550:

1535:10.2307/2283644

1515:

1511:

1480:

1476:

1469:

1455:

1451:

1398:

1394:

1387:

1365:

1358:

1327:

1323:

1314:

1312:

1253:

1249:

1244:

1215:Margin of error

1190:68–95–99.7 rule

1180:

1173:

1170:

1161:

1149:decision theory

1114:

1108:

1086:

1082:

1080:

1077:

1076:

1059:

1055:

1053:

1050:

1049:

1028:

1024:

1022:

1019:

1018:

984:

980:

972:

969:

968:

937:

933:

918:

914:

906:

903:

902:

883:

879:

877:

874:

873:

856:

852:

850:

847:

846:

835:

816:

796:

770:

766:

762:

760:

757:

756:

738:

735:

734:

718:

715:

714:

697:

680:

674:

671:

670:

646:

629:

611:

598:

595:

585:

581:

577:

568:

564:

562:

559:

558:

539:

535:

533:

530:

529:

512:

508:

506:

503:

502:

481:

477:

462:

458:

456:

453:

452:

431:

427:

412:

408:

406:

403:

402:

374:

370:

352:

348:

339:

335:

330:

327:

326:

304:

301:

300:

289:

279:. The founder,

266:

257:

252:

220:

212:

198:

196:

193:

192:

159:

112:

107:

70:Bayesian method

17:

12:

11:

5:


2130:

2124:

2122:

2121:Summary tables

2118:

2117:

2115:

2114:

2108:

2106:

2100:

2099:

2096:

2095:

2093:

2092:

2091:

2090:

2085:

2080:

2070:

2064:

2062:

2056:

2055:

2053:

2052:

2047:

2042:

2037:

2032:

2027:

2022:

2016:

2014:

2008:

2007:

2005:

2004:

1999:

1994:

1993:

1992:

1987:

1982:

1977:

1972:

1967:

1962:

1957:

1955:Contraharmonic

1952:

1947:

1936:

1934:

1925:

1915:

1914:

1902:

1901:

1899:

1898:

1893:

1887:

1884:

1883:

1876:

1875:

1868:

1861:

1853:

1847:

1846:

1840:

1832:

1831:External links

1829:

1828:

1827:

1819:

1818:

1817:

1816:

1806:

1805:

1796:

1793:

1790:

1789:

1782:

1764:

1757:

1737:

1718:(4): 138–142.

1698:

1687:(2): 533–540.

1667:

1626:

1587:

1568:(3): 193–197.

1548:

1509:

1474:

1467:

1449:

1412:(4): 290–301.

1392:

1385:

1356:

1321:

1246:

1245:

1243:

1240:

1239:

1238:

1232:

1227:

1222:

1217:

1212:

1207:

1202:

1197:

1192:

1186:

1185:

1169:

1166:

1160:

1157:

1107:

1104:

1089:

1085:

1062:

1058:

1031:

1027:

1004:

1001:

998:

995:

992:

987:

983:

979:

976:

951:

948:

945:

940:

936:

932:

929:

926:

921:

917:

913:

910:

886:

882:

859:

855:

834:

831:

815:

812:

795:

792:

777:

773:

769:

765:

742:

722:

700:

695:

692:

689:

686:

683:

679:

649:

644:

641:

638:

635:

632:

628:

623:

618:

615:

610:

607:

604:

601:

592:

588:

584:

580:

576:

571:

567:

542:

538:

515:

511:

489:

484:

480:

476:

473:

470:

465:

461:

439:

434:

430:

426:

423:

420:

415:

411:

382:

377:

373:

369:

366:

363:

360:

355:

351:

347:

342:

338:

334:

308:

288:

285:

265:

262:

256:

253:

251:

248:

219:

208:

158:

155:

111:

108:

106:

103:

27:is the use of

15:

9:

6:

4:

3:

2:

Text is available under the Creative Commons Attribution-ShareAlike License. Additional terms may apply.